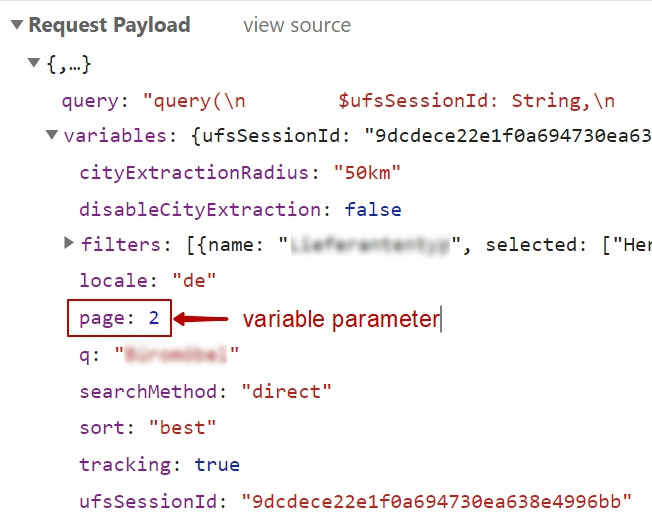

Recently I needed to scrape data that is loaded dynamically by a “Load more” button. The site's JavaScript issues an XHR (an Ajax request) to fetch the next portion of data, so I needed to replay that XHR with some of its POST parameters turned into variables.

So, how do we do that in Node.js?

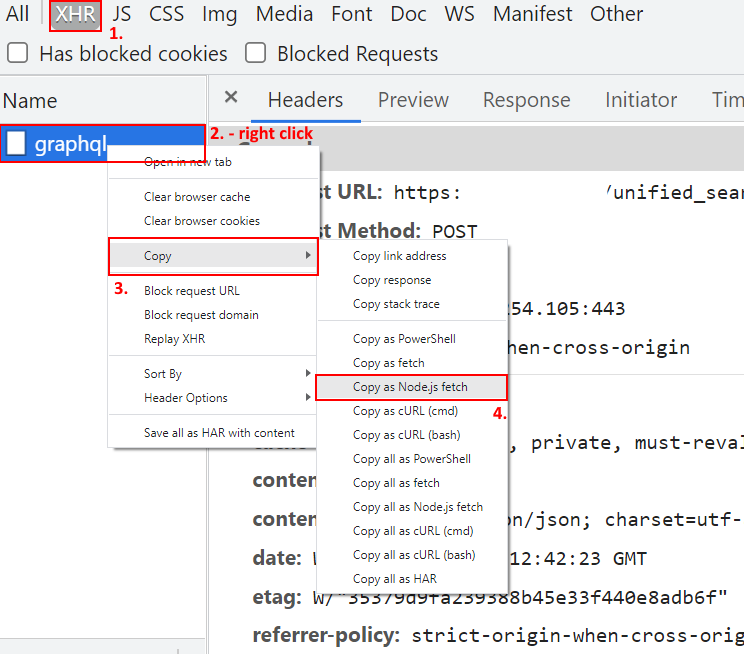

1. Copy the XHR from Dev tools as a fetch() call

In Developer tools (F12), open the Network tab, right-click the request, and copy it as a Node.js fetch() call (in Chrome: Copy → Copy as Node.js fetch). The result will look something like this:

    fetch("https://www.xxx.com/graphql", {
        "headers": {
            "accept": "application/json, text/plain, */*",
            "accept-language": "en-US,en;q=0.9,ru;q=0.8",
            "content-type": "application/json",
            "sec-ch-ua": "\" Not;A Brand\";v=\"99\", \"Google Chrome\";v=\"91\", \"Chromium\";v=\"91\"",
            "sec-ch-ua-mobile": "?0",
            "sec-fetch-dest": "empty",
            "sec-fetch-mode": "cors",
            "sec-fetch-site": "same-origin",
            "x-original-url": "https://www.xxx.com/search/page/2?q=yyy",
            "cookie": "wlw_client_id=rBEAAmC2BOKcHADzAxVeAg==;..."
        },
        "referrer": "https://www.xxx.com/search/page/2?q=B%C3%BCrom%C3%B6bel&supplierTypes=Hersteller",
        "referrerPolicy": "origin-when-cross-origin",
        "body": "{\"query\":\"query( $ufsSessionId: String, $searchMethod: String, $q: String, $conceptSlug: String, $citySlug: String, $filters: [FilterInput!], $page: Int, $sort: String, $tracking: Boolean, $disableCityExtraction: Boolean, $cityExtractionRadius: String } }\",\"variables\":{\"ufsSessionId\":\"9dcdece22e1f0a694730ea638e4996bb\",\"searchMethod\":\"direct\",\"locale\":\"de\",\"q\":\"Büromöbel\",\"filters\":[{\"name\":\"Lieferantentyp\",\"selected\":[\"Hersteller\"]}],\"page\":2,\"sort\":\"best\",\"tracking\":true,\"disableCityExtraction\":false,\"cityExtractionRadius\":\"50km\"}}",
        "method": "POST",
        "mode": "cors"
    });

2. The full code

Wrap that fetch() call in an asynchronous function. The page parameter in the request body is set from the wrapper function's input variable, i. The results are written to a file through a write stream.
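One way to change the parameter value is to parse the copied body once and overwrite only the page variable for each request. This is a minimal sketch, not necessarily how the original script does it: `buildBody` and `copiedBody` are hypothetical names, and the query text is a shortened placeholder rather than the site's real query.

```javascript
// Stand-in for the "body" string copied from Dev tools.
// The query text is a shortened placeholder, not the site's real query.
const copiedBody = JSON.stringify({
    query: "query($q: String, $page: Int) { ... }",
    variables: { q: "Büromöbel", page: 2, sort: "best" }
});

// Hypothetical helper: returns a new body string with only the page changed.
function buildBody(bodyTemplate, page) {
    const payload = JSON.parse(bodyTemplate);  // string -> object
    payload.variables.page = page;             // overwrite just the page number
    return JSON.stringify(payload);            // object -> string for fetch()
}

console.log(buildBody(copiedBody, 3));
```

Going through JSON.parse and JSON.stringify avoids hand-editing the escaped quotes in the copied string, which is where concatenation mistakes tend to creep in.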

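    // Node.js 18+ has a global fetch(); on older versions use the node-fetch package.
    const fs = require('fs');

    // The file name is an assumption; this excerpt does not show where `stream` is created.
    const stream = fs.createWriteStream('names.txt', { flags: 'a' });

    async function getdata(i) {
        const response = await fetch("https://www.xxx.com/graphql", {
            "headers": {
                "accept": "application/json, text/plain, */*",
                "content-type": "application/json"
            },
            // Query text and the other variables are the ones copied in step 1 (elided here);
            // only "page" is taken from i.
            "body": JSON.stringify({ "query": "...", "variables": { "page": i } }),
            "method": "POST",
            "mode": "cors"
        });
        const value = await response.json();
        const companies = value.data.search.companies;
        //console.log( companies );
        const names = []; // we fetch names only
        console.log('\nPage: ', i);
        for (const c of companies) {
            console.log(c['name']);
            names.push(c['name']);
        }
        // Write one name per line.
        names.forEach(function (item) {
            stream.write(item + "\n");
        });
    }

You can download the whole code below.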
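To collect several pages, the wrapper can be called in a loop. The sketch below is one possible driver, under the assumption that a function resolving to the names of a single page is available; `scrapePages`, `fetchNames`, and `fakeFetchNames` are hypothetical names, and the fake fetcher only stands in for the real request so the example runs offline.

```javascript
// Hypothetical driver: requests pages one at a time and writes one name per line.
// `fetchNames(page)` is assumed to resolve to an array of names for that page.
// `out` is anything with a write() method, e.g. a file stream or process.stdout.
async function scrapePages(fetchNames, firstPage, lastPage, out) {
    let total = 0;
    for (let page = firstPage; page <= lastPage; page++) {
        const names = await fetchNames(page);  // wait before requesting the next page
        if (names.length === 0) break;         // stop early when a page comes back empty
        for (const name of names) {
            out.write(name + "\n");
            total++;
        }
    }
    return total;
}

// Placeholder fetcher with made-up names, used only to run the sketch offline.
async function fakeFetchNames(page) {
    return page <= 2 ? [`company-${page}a`, `company-${page}b`] : [];
}

scrapePages(fakeFetchNames, 1, 5, process.stdout)
    .then((count) => console.log(`wrote ${count} names`));
```

Awaiting each page before requesting the next keeps the output in page order and avoids firing all requests at the site at once.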