<?php

// Request an access token from the Octoparse OpenAPI and save the JSON response to a file.
$base_url = "https://openapi.octoparse.com";
$token_url = $base_url . '/token';

// Credentials, sent as a JSON body
$post = [
    'username' => 'igorsavinkin',
    'password' => '<xxxxxx>',
    'grant_type' => 'password'
];
$payload = json_encode($post);

$headers = [
    'Content-Type: application/json',
    'Content-Length: ' . strlen($payload)
];
$timeout = 30;

$ch_upload = curl_init();
curl_setopt($ch_upload, CURLOPT_URL, $token_url);
if ($headers) {
    curl_setopt($ch_upload, CURLOPT_HTTPHEADER, $headers);
}
curl_setopt($ch_upload, CURLOPT_POST, true);
curl_setopt($ch_upload, CURLOPT_POSTFIELDS, $payload /*http_build_query($post)*/ );
curl_setopt($ch_upload, CURLOPT_RETURNTRANSFER, true);
curl_setopt($ch_upload, CURLOPT_CONNECTTIMEOUT, $timeout);

$response = curl_exec($ch_upload);
if (curl_errno($ch_upload)) {
    // report the error from the same handle that ran the request
    echo 'Curl Error: ' . curl_error($ch_upload);
}
curl_close($ch_upload);

//echo 'Response length: ', strlen($response);
echo $response;

// save the raw response
$fp = fopen('octoparse-api-token.json', 'w');
fwrite($fp, $response);
fclose($fp);

Tag: Curl

CURL request into Curl PHP code

Recently I needed to convert a curl command into PHP cURL code. The request involved binary data and the --compressed option. Here is the command itself:

curl 'https://terraswap-graph.terra.dev/graphql' \
  -H 'Accept-Encoding: gzip, deflate, br' \
  -H 'Content-Type: application/json' \
  -H 'Accept: application/json' \
  -H 'Connection: keep-alive' \
  -H 'DNT: 1' \
  -H 'Origin: https://terraswap-graph.terra.dev' \
  --data-binary '{"query":"{\n pairs {\n pairAddress\n latestLiquidityUST\n token0 {\n tokenAddress\n symbol\n }\n token1 {\n tokenAddress\n symbol\n }\n commissionAPR\n volume24h {\n volumeUST\n }\n }\n}\n"}' \
  --compressed

The DOMXPath class is a convenient and popular means to parse HTML content with XPath.
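The curl command shown earlier (the GraphQL request to terraswap-graph.terra.dev) might be translated to PHP roughly as follows. This is a sketch under some assumptions: the helper name graphql_curl_options() is hypothetical, and the query is shortened for brevity. The --data-binary flag maps to passing the raw JSON string to CURLOPT_POSTFIELDS, and --compressed corresponds to setting CURLOPT_ENCODING to an empty string, which lets libcurl advertise every encoding it supports and decode the response itself. For that reason the explicit Accept-Encoding header is left out here.

```php
<?php
// Hypothetical helper: cURL options mirroring the flags of the curl command.
function graphql_curl_options(string $payload): array
{
    return [
        CURLOPT_POST => true,
        // --data-binary: a string body is sent exactly as given
        CURLOPT_POSTFIELDS => $payload,
        // the -H flags; Accept-Encoding is handled by CURLOPT_ENCODING below
        CURLOPT_HTTPHEADER => [
            'Content-Type: application/json',
            'Accept: application/json',
            'Connection: keep-alive',
            'DNT: 1',
            'Origin: https://terraswap-graph.terra.dev',
        ],
        // --compressed: '' means "all encodings libcurl supports", decoded automatically
        CURLOPT_ENCODING => '',
        // return the body from curl_exec() instead of printing it
        CURLOPT_RETURNTRANSFER => true,
    ];
}

// Shortened version of the query from the command
$query = "{\n pairs {\n pairAddress\n latestLiquidityUST\n }\n}\n";
$payload = json_encode(['query' => $query]);
$options = graphql_curl_options($payload);

$ch = curl_init('https://terraswap-graph.terra.dev/graphql');
curl_setopt_array($ch, $options);

// Uncomment to actually send the request:
// $response = curl_exec($ch);
// echo $response === false ? 'Curl Error: ' . curl_error($ch) : $response;
```

Building the body with json_encode() rather than pasting the escaped string avoids quoting mistakes with the embedded \n sequences.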

After I built a simple PHP/cURL scraper using regex, some readers reasonably asked for a more efficient scrape with XPath. So, instead of parsing the content with regex, I used the DOMXPath class methods.
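As a minimal sketch of the DOMXPath approach, the snippet below parses a small HTML string instead of a live page. The markup, the class names, and the $products array are made up for illustration; in a real scraper the HTML would come from a cURL response.

```php
<?php
// Sample markup standing in for a page fetched with cURL (an assumption).
$html = '<html><body>
  <div class="product"><h2>Widget</h2><span class="price">9.99</span></div>
  <div class="product"><h2>Gadget</h2><span class="price">19.50</span></div>
</body></html>';

$dom = new DOMDocument();
// Collect libxml warnings internally; real-world HTML is often malformed.
libxml_use_internal_errors(true);
$dom->loadHTML($html);
libxml_clear_errors();

$xpath = new DOMXPath($dom);
$products = [];
foreach ($xpath->query('//div[@class="product"]') as $node) {
    // The second argument makes each expression relative to the current node.
    $name  = $xpath->evaluate('string(h2)', $node);
    $price = $xpath->evaluate('string(span[@class="price"])', $node);
    $products[$name] = (float) $price;
}

print_r($products);
```

Compared with regex, the XPath expressions keep working when attributes are reordered or whitespace changes, since they address the parsed tree rather than the raw text.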

Web parsing php tools

Almost every developer has faced a data parsing task. The needs vary, from parsing a product catalog to parsing stock prices. Parsing is a very popular area of back-end development, and there are specialists who build quality parsers and scrapers. Besides, the topic is interesting and appeals to everyone who enjoys the web. Today we review PHP tools used for parsing web content.

Php Curl download file

We want to show how to download a file from a server with cURL. The comments in the code serve as explanations.

// open a file handle for writing
$fp = fopen("image.png", 'w+') or die('Unable to write a file');

// file to download
$ch = curl_init('http://scraping.pro/ewd64.png');

// skip SSL certificate verification (insecure; use only if needed)
curl_setopt($ch, CURLOPT_SSL_VERIFYPEER, false);

// write the response body to the file handle
curl_setopt($ch, CURLOPT_FILE, $fp);

// follow redirects
curl_setopt($ch, CURLOPT_FOLLOWLOCATION, 1);

// set a large timeout (in seconds) to allow curl to run for a longer time
curl_setopt($ch, CURLOPT_TIMEOUT, 1000);
curl_setopt($ch, CURLOPT_USERAGENT, 'any');

// enable debug output
curl_setopt($ch, CURLOPT_VERBOSE, true);

curl_exec($ch);
curl_close($ch);
fclose($fp);

- Apply a webhook service to request your target data and store it in a database.

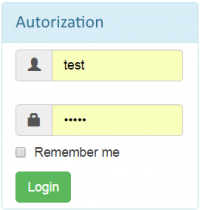

Recently I was challenged to make a script that would authenticate through a bot-proof login form and redirect to a logged-in page.


Handling HTTP Cookies in cURL

Most developers get stuck on cookie handling in web scraping. It is a tricky thing, and it was once my stumbling block too. So here, mainly for new scraping engineers, I’d like to share how to handle cookies in web scraping with PHP. We’ve already done a post on scraping with cURL in PHP, so here we’ll focus only on the cookie side. A cookie is a small piece of data sent from a website and stored in the user’s web browser while the user is browsing that website. When a browser requests a page and a cookie is returned along with the web content, the browser does all the dirty work: it stores the cookie and sends it back to the server that rendered the page in subsequent requests.
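With cURL there is no browser to do that work, so the script has to enable cookie storage itself. A minimal sketch, assuming a single cookie file is used for both reading and writing: the helper names cookie_curl_options() and fetch_with_cookies() are hypothetical, while CURLOPT_COOKIEFILE and CURLOPT_COOKIEJAR are the standard cURL options for this.

```php
<?php
// Hypothetical helper: options that make cURL keep cookies like a browser would.
function cookie_curl_options(string $cookieFile): array
{
    return [
        // read cookies from this file and switch on cURL's cookie engine
        CURLOPT_COOKIEFILE => $cookieFile,
        // write all known cookies to this file when the handle is released
        CURLOPT_COOKIEJAR => $cookieFile,
        CURLOPT_FOLLOWLOCATION => true,
        CURLOPT_RETURNTRANSFER => true,
    ];
}

// Hypothetical helper: visit one page to receive cookies, then a second page
// that needs them. Both requests share one handle, so cookies carry over.
function fetch_with_cookies(string $firstUrl, string $secondUrl, string $cookieFile)
{
    $ch = curl_init();
    curl_setopt_array($ch, cookie_curl_options($cookieFile));

    curl_setopt($ch, CURLOPT_URL, $firstUrl);
    curl_exec($ch);               // Set-Cookie headers from the server are stored

    curl_setopt($ch, CURLOPT_URL, $secondUrl);
    $page = curl_exec($ch);       // stored cookies are sent back automatically

    // The handle is released when $ch goes out of scope; the cookies are
    // written to $cookieFile at that point.
    return $page;                 // string on success, false on failure
}

$cookieFile = sys_get_temp_dir() . '/scraper-cookies.txt';
$options = cookie_curl_options($cookieFile);
```

A later run of the script that passes the same cookie file would start with the saved cookies already loaded, which is how a logged-in session can survive between runs.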


Scraping in PHP with cURL

In this post, I’ll explain how to do a simple web page extraction in PHP using cURL, the ‘Client URL library’.

PHP’s cURL extension is built on libcurl, a library that lets you connect to servers over many different protocols, including HTTP and HTTPS. This way of getting data from the web handles headers, cookies, and errors more reliably than a simple file_get_contents() call. If the cURL extension is not installed, you can read here for Windows or here for Linux.
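To illustrate the point about error handling, here is a minimal sketch of a page fetch that reports the body, the HTTP status, and any transport error separately. The function name fetch_page() and the shape of the returned array are assumptions made for this example.

```php
<?php
// Hypothetical helper: fetch a URL and return body, HTTP status, and error text.
function fetch_page(string $url, int $timeout = 30): array
{
    $ch = curl_init($url);
    curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);   // return the body as a string
    curl_setopt($ch, CURLOPT_FOLLOWLOCATION, true);   // follow redirects
    curl_setopt($ch, CURLOPT_CONNECTTIMEOUT, $timeout);
    curl_setopt($ch, CURLOPT_TIMEOUT, $timeout);

    $body = curl_exec($ch);                           // false on transport failure

    // The handle is released when $ch goes out of scope at return.
    return [
        'body'   => $body === false ? null : $body,
        'status' => curl_getinfo($ch, CURLINFO_HTTP_CODE),  // 0 if no HTTP response
        'error'  => $body === false ? curl_error($ch) : null,
    ];
}

// Usage (placeholder URL):
// $result = fetch_page('https://example.com/');
// if ($result['error'] !== null) {
//     echo 'Curl Error: ' . $result['error'];
// } elseif ($result['status'] === 200) {
//     echo strlen($result['body']) . ' bytes received';
// }
```

With file_get_contents() a failed request only yields false plus a warning, whereas here the caller can tell a timeout from a 404 and react to each.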