- Apply a webhook service to request your target data and store them to DB.

- Webhook service performs a request to your server (where your data are) or static data files (CSV/XSL)

- Your app or script replies [to a hook request] with data to a webhook service. Usually a POST request.

- A webhook service adds that fetched data to your SQL server (db) as a new record.

Alternative:

As an alternative you might implement data POST-ing to your storing script.

(1) POST–ing data to a storing script (php):$url= "/script.php" foreach($data as $key=>$value){ $dataAsString .= $key.'='.$value.'&'; } $dataAsString = rtrim($dataAsString,'&'); //make a connection $ch = curl_init(); curl_setopt($ch,CURLOPT_URL, $url); curl_setopt($ch,CURLOPT_POST, count($data)); curl_setopt($ch,CURLOPT_POSTFIELDS,$dataAsString); curl_exec($ch);(2) script.php to get the POST data and store it to SQL server (php):

$db_conn = mysqli_connect("localhost", "user", "password", "db_name"); $stmt = $mysqli->prepare("INSERT INTO table_name (`key`,`value`,`datetime`) VALUES (?, ?, NOW())"); foreach ($_POST as $key => $value) { $stmt->bind_param('sd', $key, $value); $stmt->execute(); } - Make a DB connection in your script.

Config (python):dbs = { "mysql": { "name": "database_name", "host": "127.0.0.1", "user": "root", "pass": "root", } }Connection (python):

import config def __init__(self) self.initialized = False self.conn = None def connect(self): if not self.initialized or self.conn.open == 0: self.conn = MySQLdb.connect(host=config.dbs["mysql"]["host"], user=config.dbs["mysql"]["user"], passwd=config.dbs["mysql"]["pass"], db=config.dbs["mysql"]["name"]) self.initialized = True return self.connActual storing (python):

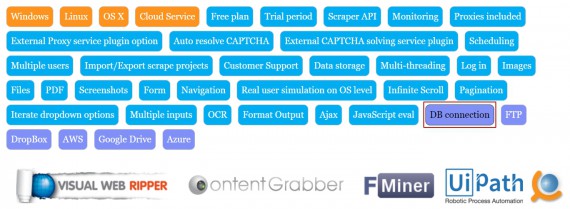

cur = self.connect().cursor() for item in data: cur.execute("INSERT INTO table_name (key, value) VALUES ('{0}', '{1}')".format(item['key'], item['value'])) self.conn.commit() - Use a preinstalled DB connection feature within a scraping software or service, if using any. See this landscape page showing multiple scraping services, where you can filter them out by selecting a needed feature of the web scraping tool. Press DB connection option to see the services implementing it:

- Also, if you’re going to run your own server, you also have the option of not using a web hook at all. Your server can subscribe to the events directly using server-sent events (SSE). Once you have the the data from the events you can do whatever you want with it, including storing it in a SQL database. (source)

Welcome your comments and suggestions.