<?php

// Request an access token from the Octoparse OpenAPI and save the response to a file.
$base_url = "https://openapi.octoparse.com";
$token_url = $base_url . '/token';

$post = [
    'username' => 'igorsavinkin',
    'password' => '<xxxxxx>',
    'grant_type' => 'password'
];
// The credentials are sent as a JSON body.
$payload = json_encode($post);

$headers = [
    'Content-Type: application/json',
    'Content-Length: ' . strlen($payload)
];
$timeout = 30;

$ch_upload = curl_init();
curl_setopt($ch_upload, CURLOPT_URL, $token_url);
if ($headers) {
    curl_setopt($ch_upload, CURLOPT_HTTPHEADER, $headers);
}
curl_setopt($ch_upload, CURLOPT_POST, true);
curl_setopt($ch_upload, CURLOPT_POSTFIELDS, $payload /*http_build_query($post)*/ );
// Return the response as a string instead of printing it.
curl_setopt($ch_upload, CURLOPT_RETURNTRANSFER, true);
curl_setopt($ch_upload, CURLOPT_CONNECTTIMEOUT, $timeout);

$response = curl_exec($ch_upload);
if (curl_errno($ch_upload)) {
    echo 'Curl Error: ' . curl_error($ch_upload);
}
curl_close($ch_upload);

//echo 'Response length: ', strlen($response);
echo $response;

// Store the raw JSON response for later use.
$fp = fopen('octoparse-api-token.json', 'w');
fwrite($fp, $response);
fclose($fp);

Tag: PHP

CURL request into Curl PHP code

Recently I needed to convert a curl command into PHP cURL code. The request involved binary data and the compressed option. Here is the command itself:

curl 'https://terraswap-graph.terra.dev/graphql' \
  -H 'Accept-Encoding: gzip, deflate, br' \
  -H 'Content-Type: application/json' \
  -H 'Accept: application/json' \
  -H 'Connection: keep-alive' \
  -H 'DNT: 1' \
  -H 'Origin: https://terraswap-graph.terra.dev' \
  --data-binary '{"query":"{\n pairs {\n pairAddress\n latestLiquidityUST\n token0 {\n tokenAddress\n symbol\n }\n token1 {\n tokenAddress\n symbol\n }\n commissionAPR\n volume24h {\n volumeUST\n }\n }\n}\n"}' \
  --compressed

Recently I needed to do a bulk insert into a database using a prepared statement. The task was to make it all-or-nothing: if one record fails, all records are rolled back and an error is returned. That way no data is affected by faulty code or bad input.
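The all-or-nothing insert described above can be sketched roughly as follows. This is only an illustration under my own assumptions: PDO with the SQLite driver, an in-memory database, and a hypothetical `users` table and `bulkInsert()` helper. None of these names come from the original post.

```php
<?php
// Sketch: all-or-nothing bulk insert with a prepared statement.
// Assumptions (not from the post): PDO + in-memory SQLite,
// a hypothetical `users` table and a hypothetical bulkInsert() helper.

function bulkInsert(PDO $pdo, array $rows): bool
{
    // Prepare once, execute many times with different values.
    $stmt = $pdo->prepare('INSERT INTO users (name, email) VALUES (:name, :email)');
    $pdo->beginTransaction();
    try {
        foreach ($rows as $row) {
            $stmt->execute([':name' => $row['name'], ':email' => $row['email']]);
        }
        $pdo->commit();
        return true;
    } catch (PDOException $e) {
        // One bad record undoes every insert made in this batch.
        $pdo->rollBack();
        return false;
    }
}

$pdo = new PDO('sqlite::memory:');
// Make PDO throw exceptions so a failed insert reaches the catch block.
$pdo->setAttribute(PDO::ATTR_ERRMODE, PDO::ERRMODE_EXCEPTION);
$pdo->exec('CREATE TABLE users (name TEXT NOT NULL, email TEXT NOT NULL UNIQUE)');

$ok = bulkInsert($pdo, [
    ['name' => 'Ann', 'email' => 'ann@example.com'],
    ['name' => 'Bob', 'email' => 'bob@example.com'],
]);
$failed = bulkInsert($pdo, [
    ['name' => 'Cid', 'email' => 'cid@example.com'],
    ['name' => 'Dan', 'email' => 'ann@example.com'], // duplicate email violates UNIQUE
]);
$count = (int) $pdo->query('SELECT COUNT(*) FROM users')->fetchColumn();
```

In this sketch the second batch fails on its last row, so the valid row before it is rolled back as well and the table keeps only the two rows from the first batch.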

The DOMXPath class is a convenient and popular means to parse HTML content with XPath.

After I built a simple PHP/cURL scraper using regex, some readers reasonably asked for a more efficient scrape with XPath. So, instead of parsing the content with regex, I used the DOMXPath class methods.
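To give a feel for the approach, here is a minimal sketch of querying HTML with DOMXPath. The markup, the class names and the XPath expression are made up for illustration; a real scraper would load the page fetched with cURL instead.

```php
<?php
// Sketch: query an HTML fragment with DOMXPath.
// The markup, class names and XPath expression are hypothetical.

$html = <<<HTML
<html><body>
  <div class="product"><a href="/item/1">First item</a></div>
  <div class="product"><a href="/item/2">Second item</a></div>
  <div class="ad"><a href="/promo">Promo</a></div>
</body></html>
HTML;

$dom = new DOMDocument();
// Real-world HTML is rarely valid; collect parser warnings instead of printing them.
libxml_use_internal_errors(true);
$dom->loadHTML($html);
libxml_clear_errors();

$xpath = new DOMXPath($dom);
$links = [];
// Select only the links that sit inside a "product" block.
foreach ($xpath->query('//div[@class="product"]/a') as $a) {
    $links[$a->getAttribute('href')] = trim($a->textContent);
}

print_r($links);
```

The XPath expression states what to extract in one line, where a regex would have to match the surrounding markup by hand.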

SQL (Structured Query Language) is a powerful language for working with relational databases, but quite a few people are in fact ignorant of the dark side of this language, which is called SQL-injection. Anyone who knows this language well enough can extract the needed data from your site by means of SQL – unless developers build defenses against SQL-injection, of course. Let’s discuss how to hack data and how to secure your web resource from these kinds of data leaks!
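As a small illustration of both the attack and the defense, the sketch below runs the same lookup twice: once by pasting user input into the SQL text, and once with a bound parameter. The setup is assumed for the example only (PDO with in-memory SQLite and a hypothetical `users` table).

```php
<?php
// Sketch: the same lookup written unsafely and safely.
// Assumptions (for illustration): PDO + in-memory SQLite, hypothetical `users` table.

$pdo = new PDO('sqlite::memory:');
$pdo->setAttribute(PDO::ATTR_ERRMODE, PDO::ERRMODE_EXCEPTION);
$pdo->exec('CREATE TABLE users (name TEXT, secret TEXT)');
$pdo->exec("INSERT INTO users VALUES ('alice', 'a-secret'), ('bob', 'b-secret')");

// Attacker-controlled input that turns the WHERE clause into a tautology.
$input = "nobody' OR '1'='1";

// Vulnerable: the input is pasted into the SQL text and changes its logic.
$unsafeSql = "SELECT secret FROM users WHERE name = '$input'";
$leaked = $pdo->query($unsafeSql)->fetchAll(PDO::FETCH_COLUMN);

// Safe: the input travels as a bound parameter and is compared as plain data.
$stmt = $pdo->prepare('SELECT secret FROM users WHERE name = :name');
$stmt->execute([':name' => $input]);
$protected = $stmt->fetchAll(PDO::FETCH_COLUMN);
```

Here the concatenated query returns every secret in the table, while the prepared statement finds no user with that odd name and returns nothing.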

Web parsing php tools

Almost every developer has faced a data-parsing task. The needs vary, from scraping a product catalog to parsing stock prices. Parsing is a popular area of back-end development, and there are specialists who build quality parsers and scrapers. The topic is also interesting in its own right for anyone who enjoys the web. Today we review the PHP tools used for parsing web content.

Sometimes a project requires parsing xls documents. For example, you synchronize xls worksheets with a website database and need to import the xls data into MySQL fully automatically.

If you work on Windows it is simple enough: you just use COM objects. It is another matter if you work with PHP and need it to run on UNIX systems. Fortunately, there are many classes and libraries for this purpose. One of them is PHPExcel. This library is completely cross-platform, so you will not have portability problems.

Php Curl download file

Here is how to download a file from a server with cURL. The comments in the code explain each step.

// open a file handle to write the download to
$fp = fopen("image.png", 'w+') or die('Unable to write a file');
// file to download
$ch = curl_init('http://scraping.pro/ewd64.png');
// skip SSL certificate verification (insecure; use only if needed)
curl_setopt($ch, CURLOPT_SSL_VERIFYPEER, false);
// write the response body to the file handle
curl_setopt($ch, CURLOPT_FILE, $fp);
// follow redirects
curl_setopt($ch, CURLOPT_FOLLOWLOCATION, 1);
// set a large timeout (in seconds) to allow curl to run for a longer time
curl_setopt($ch, CURLOPT_TIMEOUT, 1000);
curl_setopt($ch, CURLOPT_USERAGENT, 'any');
// enable debug output
curl_setopt($ch, CURLOPT_VERBOSE, true);
curl_exec($ch);
curl_close($ch);
fclose($fp);

- Apply a webhook service to request your target data and store it in a DB.

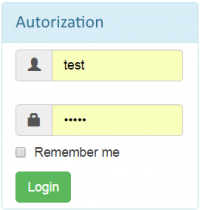

Recently I was challenged to make a script that would authenticate through a bot-proof login form and redirect to a logged-in page.