We have already written several posts about CloudScrape, a web scraping startup based in Copenhagen, Denmark. The service now has a new look, new features for data extraction and business intelligence, and a new name: Dexi.io.


How to insert reCaptcha, video

The following video shows how to insert reCaptcha v2.0 into a PHP-driven website.

https://www.youtube.com/watch?v=rks41ENvzWY

Thanks to Webucator for supplying the PHP training. Read the original post for the PHP code.

Recently I was notified that the Kimono service is shutting down because the Kimono team is joining another project. Many data hunters who were using this prominent free API service are now in search of a good alternative.

Make a web page auto scroll down

Today I want to share with you how to make a web page scroll down automatically. This is useful when dealing with social network pages, business directories (e.g. yellow pages) and other resources that load more content as you scroll.
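As a rough illustration of the idea, here is a minimal Python sketch of a scroll loop: keep scrolling to the bottom until the page height stops growing. The names `scroll_until_stable` and `FakePage` are hypothetical and are not taken from the original post. The loop takes two callables, so it is not tied to any particular browser driver; `FakePage` only simulates a page that grows on each scroll so the sketch can run without a browser.

```python
import time
from typing import Callable


def scroll_until_stable(
    get_height: Callable[[], int],
    scroll_to_bottom: Callable[[], None],
    pause: float = 1.0,
    max_rounds: int = 50,
) -> int:
    """Scroll repeatedly until the page height stops changing.

    Returns the last observed page height.
    """
    last_height = get_height()
    for _ in range(max_rounds):
        scroll_to_bottom()
        time.sleep(pause)  # give the page time to load more content
        new_height = get_height()
        if new_height == last_height:
            break  # nothing new was loaded, assume we reached the end
        last_height = new_height
    return last_height


class FakePage:
    """Hypothetical stand-in for a browser page that grows when scrolled."""

    def __init__(self, start: int = 1000, step: int = 1000, limit: int = 3000):
        self.height = start
        self.step = step
        self.limit = limit
        self.scrolls = 0

    def get_height(self) -> int:
        return self.height

    def scroll_to_bottom(self) -> None:
        self.scrolls += 1
        self.height = min(self.height + self.step, self.limit)
```

With Selenium, assuming a `driver` object is already set up, the two callables could be `lambda: driver.execute_script("return document.body.scrollHeight")` and `lambda: driver.execute_script("window.scrollTo(0, document.body.scrollHeight)")`. The `max_rounds` cap is there so that a truly endless feed does not keep the loop running forever.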


Recently I came across an interesting new tool from TheWebMiner called Filter. The Filter is an attempt by TheWebMiner to sort (categorize) indexed websites and deliver them to users as a content filtering service.


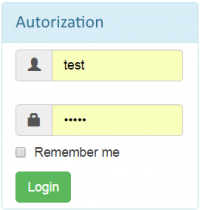

Recently I was challenged to make a script that would authenticate through a bot-proof login form and redirect to a logged-in page.


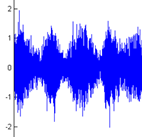

I want to share how I built an audio captcha recognizer. It was designed to solve the captcha at xbox.com back in 2012.


Web scraping with JavaScript

Is it possible to scrape an HTML page with JavaScript from inside of a web browser?

To be perfectly honest, I wasn’t sure, so I decided to try it out.

Full disclaimer here, I didn’t actually succeed. However, it was a great learning experience for me and I think you guys could benefit from seeing what I did and where I went wrong. Who knows, maybe you can take what I’ve done and figure it out for yourself!

I wanna provide you with a nice utility for quickly summing the values of multiple DOM elements. Why? Well, suppose you’re on a page like this and you want to sum up the total number of hotels in all the countries.
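To make the idea concrete, here is a small Python sketch that sums the numbers found inside every element carrying a given CSS class. It is not the utility from the original post. The class name `count`, the sample markup and the helper names are all assumptions made for illustration, and the parsing relies only on the standard library's `html.parser`.

```python
import re
from html.parser import HTMLParser

# Tags that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"br", "hr", "img", "input", "link", "meta"}


class ClassTextSummer(HTMLParser):
    """Adds up every number found inside elements with a given CSS class."""

    def __init__(self, css_class: str):
        super().__init__()
        self.css_class = css_class
        self.depth = 0  # > 0 while we are inside a matching element
        self.total = 0.0

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1  # nested tag inside a matching element
        else:
            classes = (dict(attrs).get("class") or "").split()
            if self.css_class in classes:
                self.depth = 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            # Accept plain numbers and ones with thousands separators (1,250).
            for number in re.findall(r"\d[\d,]*(?:\.\d+)?", data):
                self.total += float(number.replace(",", ""))


def sum_elements(html_text: str, css_class: str) -> float:
    parser = ClassTextSummer(css_class)
    parser.feed(html_text)
    parser.close()
    return parser.total


# Hypothetical markup, made up for this example.
SAMPLE_HTML = """
<ul>
  <li>Denmark <span class="count">1,250</span></li>
  <li>Norway <span class="count">830</span></li>
  <li>Sweden <span class="count">2,100</span></li>
</ul>
"""

print(sum_elements(SAMPLE_HTML, "count"))  # prints 4180.0
```

The sketch treats a comma as a thousands separator, which is an assumption; pages that use a comma as the decimal mark would need a different cleanup step.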

How to parse messy encoded HTML

Let’s suppose you want to extract a price with a currency sign from a web page (e.g. £220.00), but its HTML code is this:

which is obviously encoded HTML.
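One possible way to handle such a case, sketched in Python: unescape the HTML entities repeatedly until the text stops changing, strip the tags, then pick out the price with a regular expression. The sample string below is a made-up stand-in and not the snippet from the original post. The helper names `decode_fully` and `extract_price` are likewise hypothetical.

```python
import html
import re


def decode_fully(text: str, max_rounds: int = 5) -> str:
    """Unescape HTML entities until the text stops changing.

    Messy pages are sometimes encoded more than once, so one pass
    of html.unescape may not be enough.
    """
    for _ in range(max_rounds):
        decoded = html.unescape(text)
        if decoded == text:
            break
        text = decoded
    return text


def extract_price(encoded: str):
    """Return the first price with a currency sign, or None if absent."""
    decoded = decode_fully(encoded)
    plain = re.sub(r"<[^>]+>", "", decoded)  # crude tag stripping
    match = re.search(r"[£$€]\s?\d+(?:[.,]\d{2})?", plain)
    return match.group(0) if match else None


# Hypothetical, doubly encoded markup (an assumption for this example).
SAMPLE = "&lt;span class=&quot;price&quot;&gt;&amp;pound;220.00&lt;/span&gt;"

print(decode_fully(SAMPLE))   # <span class="price">£220.00</span>
print(extract_price(SAMPLE))  # £220.00
```

The regular expression only covers three currency signs and simple amounts, so it would need extending for other formats.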