We'd like to share a new testing ground for reCaptcha v2.0 with our readers. Since we research how to solve reCaptcha with web scripts and with captcha-breaking services, having a reCaptcha testing ground is vital.

This testing ground is designed according to the "How to insert and configure reCaptcha" post.

Recently I received a request on how to sum the total duration of the YouTube videos in a search result. I made a simple JS iterator that fetches the hours/min/sec values from the page HTML in the browser and sums them up.
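
A minimal sketch of that kind of iterator, not the original script: it assumes each video shows its length as a text label such as `4:15` or `1:02:03`. The function names and the selector passed to `collectLabels` are hypothetical; the real selector has to be found by inspecting the search results page.

```javascript
// Convert a duration label such as "1:02:03" or "4:15" to seconds.
function durationToSeconds(label) {
  const parts = label.trim().split(":").map(Number);
  if (parts.length > 3 || parts.some(Number.isNaN)) {
    return 0; // skip labels that are not h:mm:ss or m:ss (e.g. "LIVE")
  }
  // Fold left to right: ((h * 60) + m) * 60 + s
  return parts.reduce((total, part) => total * 60 + part, 0);
}

// Sum a list of duration labels, in seconds.
function sumDurations(labels) {
  return labels.reduce((sum, label) => sum + durationToSeconds(label), 0);
}

// Render a number of seconds as "Xh Ym Zs".
function formatSeconds(totalSeconds) {
  const h = Math.floor(totalSeconds / 3600);
  const m = Math.floor((totalSeconds % 3600) / 60);
  const s = totalSeconds % 60;
  return `${h}h ${m}m ${s}s`;
}

// Browser-only part: read the label text of every matching element.
// The selector is an assumption and must match the page's real markup.
function collectLabels(doc, selector) {
  return Array.from(doc.querySelectorAll(selector), (el) => el.textContent);
}
```

In the browser console this could be called as `formatSeconds(sumDurations(collectLabels(document, selector)))`, with `selector` set to whatever element holds the duration text on the page.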

Today I want to share how to make a web page scroll down automatically. This is useful when dealing with social network pages, business directories (e.g. Yellow Pages) and other resources that load more content automatically.
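
The auto-scrolling idea can be sketched roughly as follows. This is an illustration under assumptions, not the script from the post: it assumes the page grows its `scrollHeight` when new content loads, and it stops after a few rounds with no growth. The name `autoScroll` and the options `delayMs` and `maxIdleRounds` are hypothetical.

```javascript
// Resolve after the given number of milliseconds.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Scroll to the bottom repeatedly until the page height stops growing.
// `win` is the browser window (passed in so the loop can be tried with a stub).
// Returns the number of scroll rounds performed.
async function autoScroll(win, { delayMs = 1000, maxIdleRounds = 3 } = {}) {
  const root = win.document.documentElement;
  let lastHeight = root.scrollHeight;
  let idleRounds = 0;
  let rounds = 0;

  while (idleRounds < maxIdleRounds) {
    win.scrollTo(0, lastHeight);      // jump to the current bottom
    await sleep(delayMs);             // give the page time to load more items
    const newHeight = root.scrollHeight;
    // Reset the idle counter whenever the page grew, otherwise count up.
    idleRounds = newHeight > lastHeight ? 0 : idleRounds + 1;
    lastHeight = newHeight;
    rounds += 1;
  }
  return rounds;
}
```

In a browser console it could be started with `autoScroll(window)`. The delay may need tuning, since a slow page can look finished when it is only still loading.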

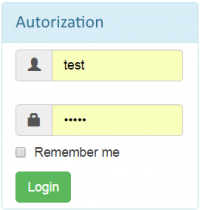

Recently I was challenged to make a script that would authenticate through a bot-proof login form and redirect to a logged-in page.

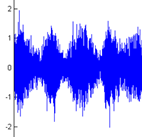

I want to share how I built an audio captcha recognizer. It was designed to solve the captcha at xbox.com back in 2012.