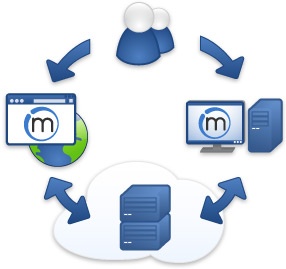

What sets Mozenda's screen scraper apart from other scrapers is that it runs your scraping projects (Agents) in the cloud. You first build a project locally in a Windows application and then execute it on Mozenda's servers.

Overview

Mozenda’s scraper software has two parts:

- Mozenda Web Console is a web application that lets you run your Agents (scrape projects), view and organize your results, and export or publish the extracted data (see the picture on the left).

- Agent Builder is a Windows application used to build your data extraction project, called an Agent (see the picture on the right).

The data extraction runs on optimized harvesting servers in Mozenda’s Data Centers, which relieves the client machine of loading web resources and of the risk of having its IP address banned if the scraping is detected.

Workflow

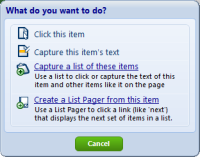

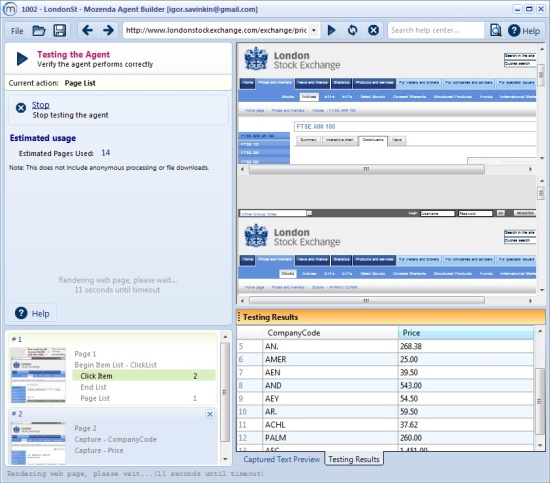

First, the target page is loaded in the Agent Builder in preparation for data extraction. Then we define the data fields we need by clicking on them. Pop-up windows prompt for the action to perform on the chosen fields, and hints given as you work make choosing fields easier.

The Action bar is comprehensive; it lets you submit form input and set up the capture of data in Ajax and iFrame structures. Capturing is very quick. Moreover, Mozenda Data Extraction automatically recognizes common data structures such as price, date, address, phone, and fax. Paging is simple to set up. A nice touch is that one can refine (transform) the text values. Once we have defined the fields to scrape, we can test the Agent and save it for later use. The Agent Builder user interface makes a good impression as a whole, especially the data preview, which works even before an Agent is tested.

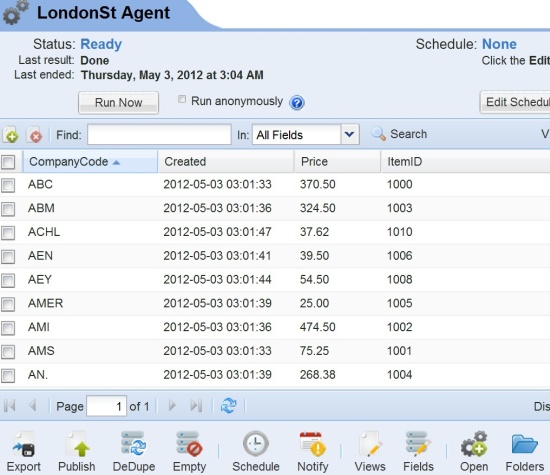

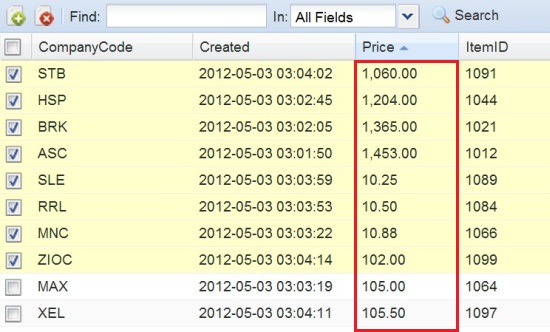

Now it’s time to move to the Web Console, where all Agents are displayed. Here we can run scrapes (Agents), schedule them, and export scrape results. When your Agent runs, the Mozenda screen scraper downloads the data and saves it to a zip file in your account. The picture below shows the results in a customized view with additional column headings, available through the Web Console. The columns “Created” and “ItemID” were added to this view from a set of built-in column headings.

The Web Console also provides convenient tools for selecting and sorting items. There is a bit of a fly in the ointment with the sorting, though. In the test case, I sorted items by price and got an unexpected result: the items were ordered as text, because the “Price” field is of “text” type, not a “value” type! (See the picture below.)

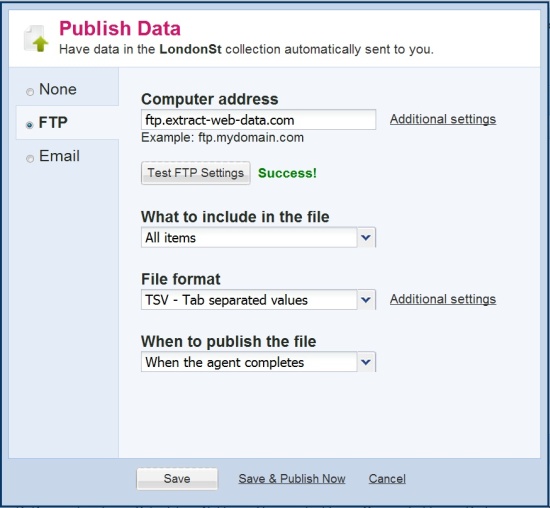
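The sorting surprise is easy to reproduce outside Mozenda. The following is a minimal sketch in Python, not Mozenda's own logic: the price strings are hypothetical sample values, and `to_number` is an illustrative helper that assumes a dollar sign and comma thousands separators.

```python
from decimal import Decimal

# Hypothetical sample values, as a text-typed "Price" column might hold them.
prices = ["$9.99", "$120.00", "$35.50", "$1,050.00"]


def to_number(price_text: str) -> Decimal:
    """Strip the currency symbol and thousands separators, then parse."""
    return Decimal(price_text.replace("$", "").replace(",", ""))


# Text sort: strings are compared character by character.
text_sorted = sorted(prices)

# Numeric sort: strings are compared by the value they represent.
numeric_sorted = sorted(prices, key=to_number)

print(text_sorted)     # ['$1,050.00', '$120.00', '$35.50', '$9.99']
print(numeric_sorted)  # ['$9.99', '$35.50', '$120.00', '$1,050.00']
```

In the text sort, "$1,050.00" comes first and "$9.99" last, which is the kind of ordering one gets when a price column is treated as text.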

One can export or publish data captured from the web as CSV, TSV, or XML files. These formats are convenient if you’re working with scheduled scrapes and sending results through FTP to targeted websites.
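Once exported, a CSV file can be processed with any standard tooling. Below is a minimal sketch in Python, assuming a hypothetical export with the columns "ItemID", "Created", "Name", and "Price". The sample rows are invented for illustration; real exports contain whatever fields the Agent defines.

```python
import csv
import io

# Hypothetical stand-in for an exported CSV file.
sample_export = """ItemID,Created,Name,Price
1001,2011-03-01,Widget A,$9.99
1002,2011-03-01,Widget B,$120.00
1003,2011-03-02,Widget C,$35.50
"""


def load_items(csv_file):
    """Read rows into dictionaries and add a numeric price to each one."""
    items = []
    for row in csv.DictReader(csv_file):
        # "PriceValue" is an illustrative extra key, not an exported column.
        row["PriceValue"] = float(row["Price"].lstrip("$").replace(",", ""))
        items.append(row)
    return items


items = load_items(io.StringIO(sample_export))
cheapest = min(items, key=lambda item: item["PriceValue"])
print(cheapest["Name"], cheapest["PriceValue"])
```

For a real file, the same function could be called with an opened file object, for example `open("export.csv", newline="")`, where the file name is again only a placeholder.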

A new feature of Mozenda is that the scraper can extract data from PDF files.

Summary

Mozenda Data Extractor is a good tool that runs your scraper in the cloud. Its distributed nature works well for large-scale scraping and for scheduled and concurrent web harvesting. Mozenda’s service for selecting items and appending output files is well suited to combining data from multiple sources. The export and publishing services (including email notifications) are outstanding features of this screen scraper.