Have you ever thought you could make money by knowing how many restaurants there are in a square mile? There is no free lunch, but if you know how to use Google Maps, you can extract restaurants’ GPS coordinates and store them in your own database. With that information in hand and some math, you are on your way to creating a big data online service.

In this article, we will show you how to quickly extract Google Maps coordinates with a simple and easy method. Let’s dive right into it.

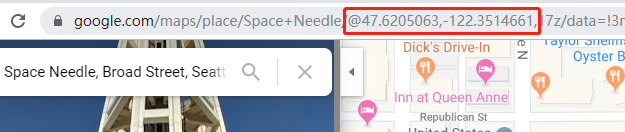

It is easy to miss that the coordinates are actually hidden inside the URL. So we need to extract the URL and use a regular expression to pull out the exact text string we are looking for. Let’s take the Space Needle landmark in Seattle as an example.

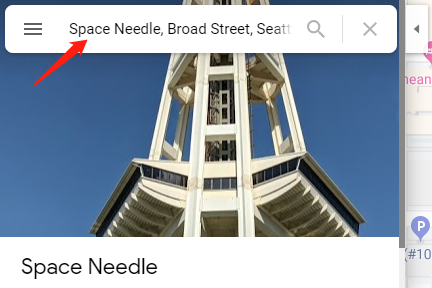

First, open Google Maps in your browser and type Space Needle in the search bar.

After the page finishes loading, look for the coordinates in the URL. They appear right after the “@” sign, as latitude and longitude separated by a comma.
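To make the idea concrete before moving to the tool, here is a minimal Python sketch that pulls the two numbers after the “@” sign out of the Space Needle URL used in this article. The function name `extract_coordinates` and the pattern are illustrative assumptions, not part of Octoparse or of any Google API, and the sketch assumes the URL has the `@latitude,longitude` form shown above.

```python
import re

# Assumed URL shape: ".../@<latitude>,<longitude>,<zoom>z/...".
# The two capture groups grab the signed decimal numbers right after "@".
COORD_PATTERN = re.compile(r"@(-?\d+(?:\.\d+)?),(-?\d+(?:\.\d+)?)")


def extract_coordinates(url):
    """Return (latitude, longitude) as floats, or None if no "@lat,lng" part is found."""
    match = COORD_PATTERN.search(url)
    if match is None:
        return None
    return float(match.group(1)), float(match.group(2))


# The Space Needle URL from this article, split across lines for readability.
url = (
    "https://www.google.com/maps/place/Space+Needle/"
    "@47.6205099,-122.3514661,17z/"
    "data=!4m5!3m4!1s0x5490151f4ed5b7f9:0xdb2ba8689ed0920d"
    "!8m2!3d47.6205063!4d-122.3492774"
)

print(extract_coordinates(url))  # (47.6205099, -122.3514661)
```

Returning `None` for a URL without coordinates keeps the caller in charge of deciding what to do with pages that did not resolve to a place.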

Next, we can start extracting the coordinates from the URL. Octoparse is the best web scraping tool that I have ever encountered, as its intuitive user interface is very easy to pick up, especially for beginners (you may download it here).

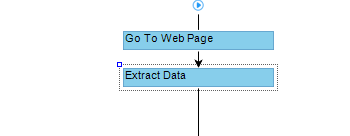

- Build a new task in Advanced Mode by clicking the “+” sign.

- Input the following URL into the box: https://www.google.com/maps/place/Space+Needle/@47.6205099,-122.3514661,17z/data=!4m5!3m4!1s0x5490151f4ed5b7f9:0xdb2ba8689ed0920d!8m2!3d47.6205063!4d-122.3492774

- Hit “Save URL” to proceed.

Now we have created a new task successfully. However, Google Maps doesn’t load properly in Octoparse’s built-in browser, because Google Maps does not support the built-in browser’s current user agent. To solve this problem, click the icon, find the User-agent Switcher, choose Firefox 45.0, and click save. Octoparse will then reload the web page by itself.

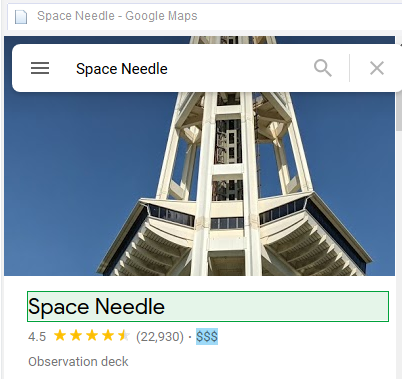

After the web page finishes loading, we can start the extraction by pointing and clicking in the built-in browser. Click the name, and the Action Tip will bring up the options you can choose from. Select “Extract text of selected element”.

You should now see that the extraction step has been created and added to the workflow below. We can rename the field in the settings area at the upper right by typing in the desired name.

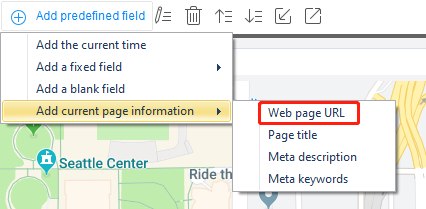

Go to the extraction field and find “Add predefined field” at the bottom. Click it to bring up the drop-down menu, select “Add current page information”, and then select “Web page URL”.

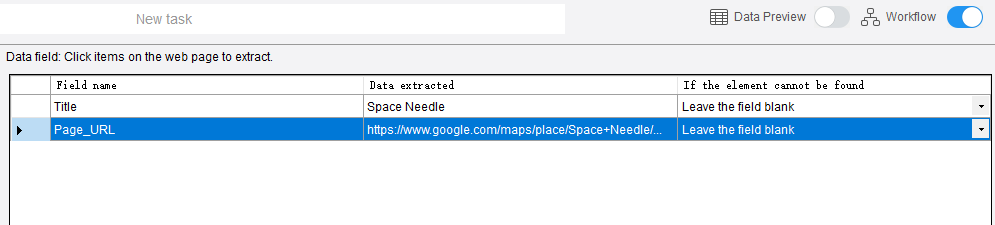

Now the web page URL has been added as a data field. This is great! Of course, we still need to trim the URL down so that only the exact coordinates remain.

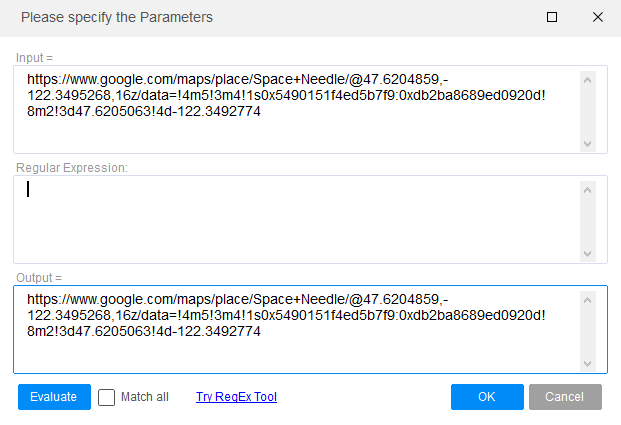

Hit the “Customize” icon (little pencil) at the bottom. Select “Refine extract data”. Then click the “Add step” button. This brings up a list of data-cleaning functions to choose from. In this case, we select “Match with regular expression”. You should arrive here.

This allows you to edit the data the way you want by writing a regular expression. A regular expression is a special text string that describes a search pattern. Since many people find these expressions hard to write, we can use the built-in RegEx tool to help us. Click the “Try RegEx Tool” button.

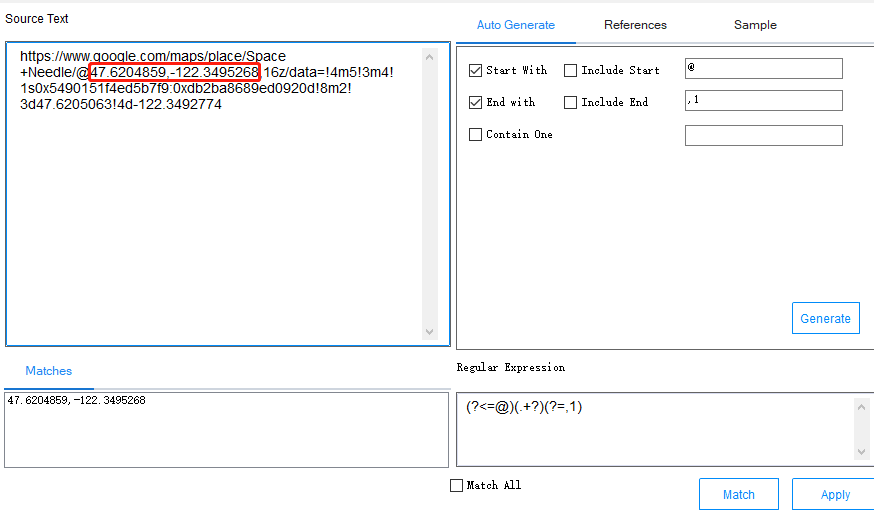

Notice that we want to pull the part after the “@” sign but before the second comma. Check the “Start With” box and input “@”. This tells the RegEx tool that you want the part after that sign. Similarly, check the “End With” box and input “,1”. As there are two commas after the “@”, we need to specify which one we mean. The second comma is followed by “1” (the first digit of the zoom level, 17z), so adding the number “1” after the comma tells the tool that you want the part before that comma. Click the “Generate” button, and the regular expression should appear in the box.

Now confirm that we have set it up properly by clicking the “Match” button. It shows the matching result on the right. Boom! This is exactly what we want. Now go ahead and click “Apply”, then click “Ok” to confirm.
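For readers curious about what a “Start With @ / End With ,1” rule looks like as an actual expression, here is a rough Python equivalent. The pattern below is an assumption written for illustration; the exact expression the Octoparse RegEx tool generates may differ.

```python
import re

# The Space Needle URL from this article, split across lines for readability.
url = (
    "https://www.google.com/maps/place/Space+Needle/"
    "@47.6205099,-122.3514661,17z/"
    "data=!4m5!3m4!1s0x5490151f4ed5b7f9:0xdb2ba8689ed0920d"
    "!8m2!3d47.6205063!4d-122.3492774"
)

# (?<=@)  "Start With @": the match begins right after the "@" sign.
# .*?     as few characters as possible...
# (?=,1)  "End With ,1": ...up to, but not including, the first ",1".
pattern = r"(?<=@).*?(?=,1)"

match = re.search(pattern, url)
coords = match.group(0) if match else None
print(coords)  # 47.6205099,-122.3514661
```

Note that this rule relies on the zoom level starting with “1” (here 17z). For a URL with a different zoom level, such as 5z, the “,1” ending would no longer mark the second comma, so the rule would need to be adjusted.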

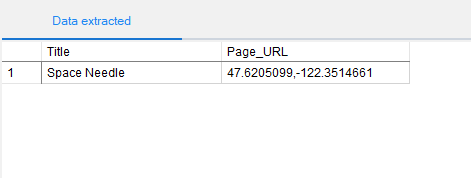

That’s it! You are done. Let’s run the crawler and see if it works. Click “Start Extraction” and pick “Local Extraction”.

Now, what if you have 1,000 addresses to look up? Don’t worry, Octoparse allows you to input over 10,000 URLs when you set up the task. It is as simple as it appears.
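If you already have the Google Maps URLs in a list, the same “@” pattern can also be applied to all of them in one pass. The sketch below is a small Python illustration of that idea, separate from Octoparse; the function name `urls_to_csv` and the column names are assumptions chosen for this example. URLs without an “@latitude,longitude” part are kept with empty coordinate columns so they can be checked later.

```python
import csv
import io
import re

# Same assumed URL shape as before: ".../@<latitude>,<longitude>,...".
COORD_PATTERN = re.compile(r"@(-?\d+(?:\.\d+)?),(-?\d+(?:\.\d+)?)")


def urls_to_csv(urls):
    """Return CSV text with one row per URL: url, latitude, longitude."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["url", "latitude", "longitude"])
    for url in urls:
        match = COORD_PATTERN.search(url)
        # Leave the coordinate columns empty when nothing matches.
        lat, lng = match.groups() if match else ("", "")
        writer.writerow([url, lat, lng])
    return buffer.getvalue()


urls = [
    # The Space Needle URL from this article.
    "https://www.google.com/maps/place/Space+Needle/"
    "@47.6205099,-122.3514661,17z/"
    "data=!4m5!3m4!1s0x5490151f4ed5b7f9:0xdb2ba8689ed0920d"
    "!8m2!3d47.6205063!4d-122.3492774",
    # A URL with no coordinates, to show the empty-column case.
    "https://www.google.com/maps",
]

print(urls_to_csv(urls))
```

The coordinates are written as the original strings rather than converted to floats, so no digits are lost before the data reaches your database.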

If you have any questions about setting up a crawler, please reach out to support@octoparse.com. Octoparse is professionally designed to walk you through the journey from a beginner to a web scraping expert. We are here to help you become a master craftsman in the art of web scraping.

More resources on Octoparse:

https://youtu.be/Rr9mczF6aFw